Note: I’m currently not aware of any PyTorch equivalent to tensorflow-metal to accelerate PyTorch code on Mac GPUs. You can see the code for all experiments and TensorFlow on Mac setup on GitHub. Whereas Google Colab and the Nvidia TITAN RTX used standard TensorFlow. The only difference was between Google Colab and the Nvidia TITAN RTX versus each of the Macs.Įach of the Macs ran a combination of tensorflow-macos (TensorFlow for Mac) and tensorflow-metal for GPU acceleration. So I was excited to see how the new machines would perform here.įor all of the custom TensorFlow tests, all machines ran the same code with the same datasets with the same environment setup. I teach TensorFlow and code it almost every day. Experiment 3: CIFAR10 TinyVGG Model with TensorFlow CodeĬreateML works fantastic but sometimes you’ll want to be making your own machine learning models.įor that, you’ll probably end up using a framework like TensorFlow. Judging by the performance, my guess is it would be using at least a pretrained ResNet50 model or EfficientNetB2 and above or similar.

Now CreateML doesn’t reveal what kind of model it uses either. However, this isn’t 100% confirmed since Activity Monitor doesn’t disclose when the Neural Engine kicks in. This leads me to believe that CreateML uses 16-core Neural Engine to accelerate training. Notably, the GPU didn’t get much usage at all during training or feature extraction. Perhaps this is the 16-core Neural Engine kicking in? Screenshot taken on M1 Pro. Style: 30x screen recordings of smaller videos (~10-minutes each) stitched togetherĭuring the training of a model in the CreateML app, CPU usage spikes to over 500%.

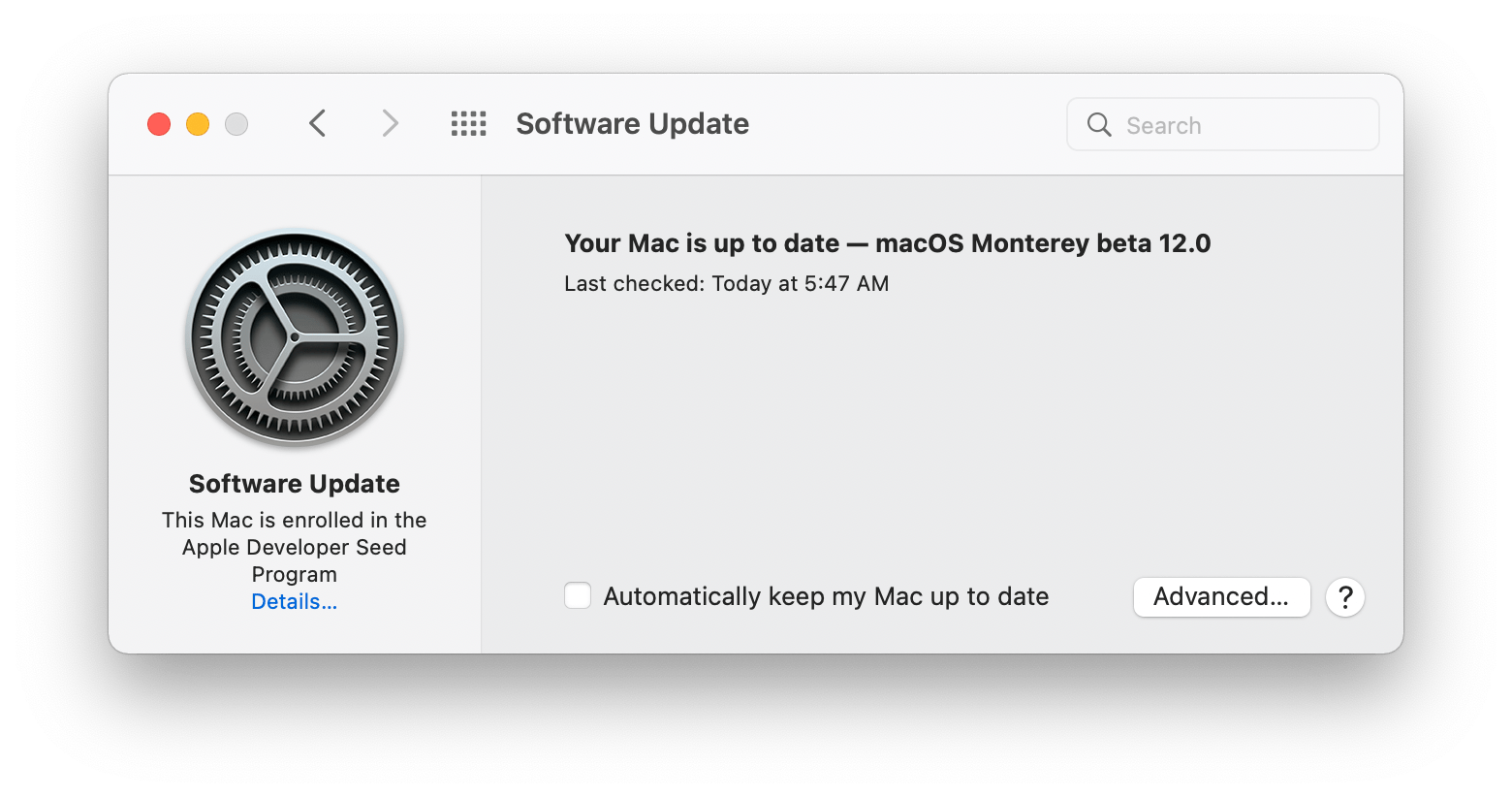

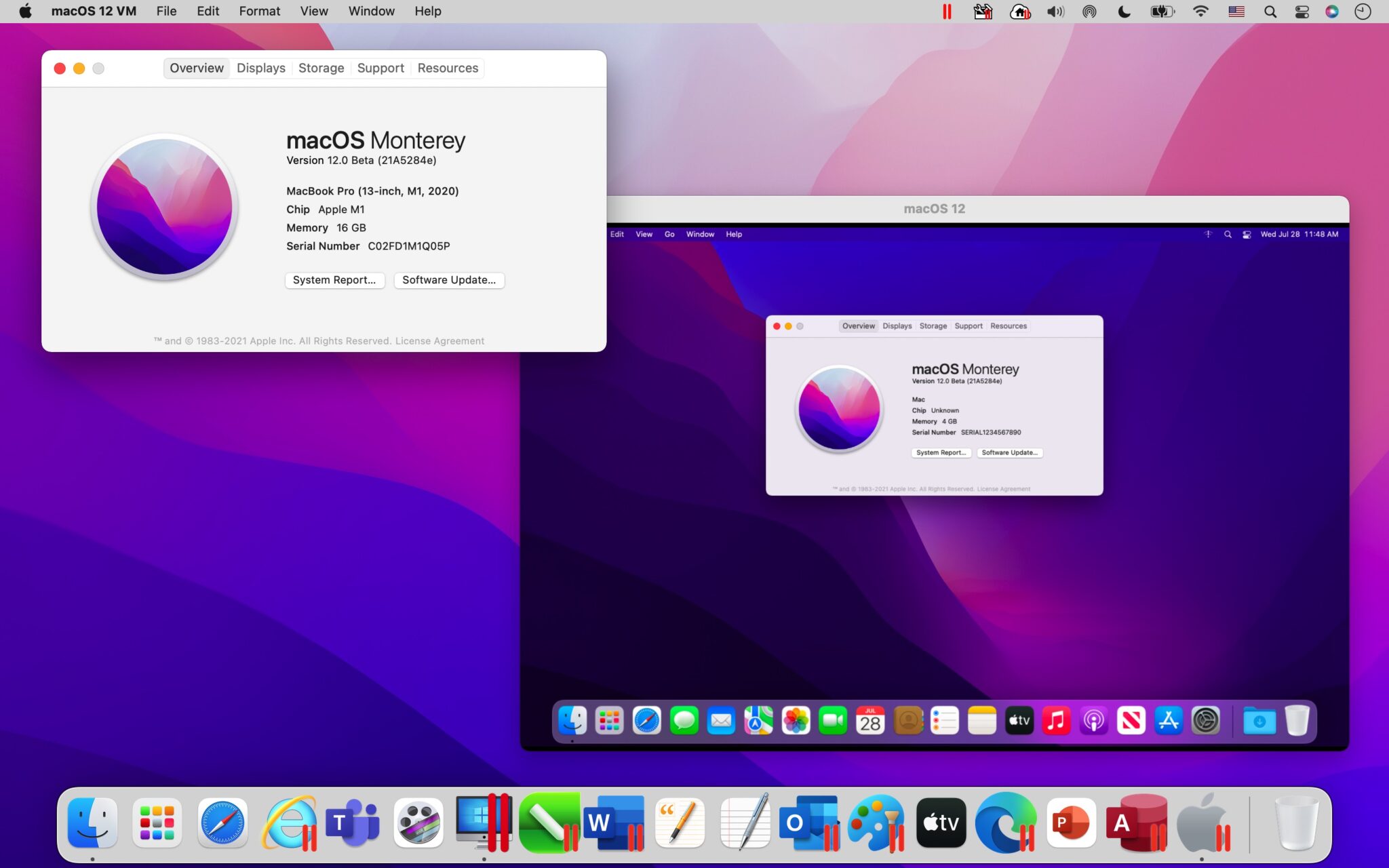

Video: Learn TensorFlow for Deep Learning Part 2.This is because of Apple’s statements that the newer M1 chips have dedicated ProRes engines. Many of which edit videos at far higher quality than I do (for now).įor each video, I exported them both to H.264 encoding (higher compression, more GPU intensive) and ProRes encoding (lower compression, less CPU and GPU intensive). Plus, the M1 Pro and M1 Max chips are targeted at pros. That’s one of the main reasons I bought a spec’d out 2019 16-inch MacBook Pro, so I could edit videos without lag. So the machine I’m using has to be fast at rendering and exporting. I make YouTube videos and educational videos teaching machine learning. Experiment 1: Final Cut Pro Export (small and large video) The specs here focus on the MacBook Pro’s, Intel-based, M1, M1 Pro, M1 Max.ĭifferent hardware specs of Mac models in testing.įor each test, all MacBook Pro’s were running macOS Monterey 12.0.1 and were plugged into power. I currently use an Intel-based MacBook Pro 16-inch as my main machine (almost always plugged in) with a 2020 13-inch M1 MacBook Pro as a take-with-me-places option.Īnd for training larger machine learning models, I use Google Colab, Google Cloud GPUs or SSH (connect via the internet) to a dedicated deep learning PC with a TITAN RTX GPU.įor the TensorFlow code tests, I’ve included comparisons with Google Colab and the TITAN RTX GPU. For design, inputs, outputs, battery life, there’s plenty of other resources out there. This article is strictly focused on performance. So how do the new M1 Pro and M1 Max chips go with transfer learning using TensorFlow code? 
Food101 EfficientNetB0 feature extraction with tensorflow-macos - I rarely train machine learning models from scratch.CIFAR10 TinyVGG Image Classification with TensorFlow (via tensorflow-macos ) – Thanks to tensorflow-metal, you can now leverage your MacBook's internal GPU to speed up machine learning model training.CreateML Image Classification Machine Learning Model Creation - How fast can the various MacBook Pro’s turn 10,000 images into an image classification model with CreateML?.Final Cut Pro Export - How fast can the various MacBook Pro’s export a 4-hour long TensorFlow instructional video (I make coding education videos) and a 10-minute long story video (using H.264 and ProRes encodings)?.

I’m typing this on a MacBook Pro.Īnd being the tech nerd I am, when Apple released a couple of new MacBook Pro’s with upgraded hardware: M1 Pro, M1 Max chips and redesigns and all the rest, I decided, I better test them out.įor context, I make videos on machine learning, write machine learning code and teach machine learning.Ĭomparing Apple’s M1, M1 Pro and M1 Max chips against each other and a few other chips. The main keyboard I used is attached to a MacBook Pro. The trademark green charging light is back.
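Before timing any of the custom TensorFlow tests, it's worth confirming that TensorFlow can actually see a GPU on the machine in question. The snippet below is a minimal sketch of that check, not the code from the experiments. The helper name `tensorflow_devices` is made up for illustration, and it assumes TensorFlow was installed beforehand (on the Macs, via the tensorflow-macos and tensorflow-metal packages mentioned in this article). If TensorFlow isn't installed, it reports that rather than failing.

```python
import platform


def tensorflow_devices():
    """Return the TensorFlow version and the GPU device names it can see.

    Hypothetical helper for illustration; not part of the experiment code.
    """
    try:
        import tensorflow as tf
    except ImportError:
        # TensorFlow is not installed here, so there is nothing to query.
        return {"version": None, "gpus": []}

    # With tensorflow-metal installed on an Apple silicon Mac, the built-in
    # GPU is expected to show up in this list. On Google Colab or a TITAN RTX
    # machine running standard TensorFlow, it would be the Nvidia GPU instead.
    gpus = tf.config.list_physical_devices("GPU")
    return {"version": tf.__version__, "gpus": [gpu.name for gpu in gpus]}


if __name__ == "__main__":
    info = tensorflow_devices()
    print(f"Machine architecture: {platform.machine()}")
    print(f"TensorFlow version: {info['version'] or 'not installed'}")
    print(f"GPUs visible to TensorFlow: {info['gpus'] or 'none'}")
```

If the GPU list comes back empty on a Mac, training would most likely fall back to the CPU, which would make timing comparisons against the other machines misleading.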
